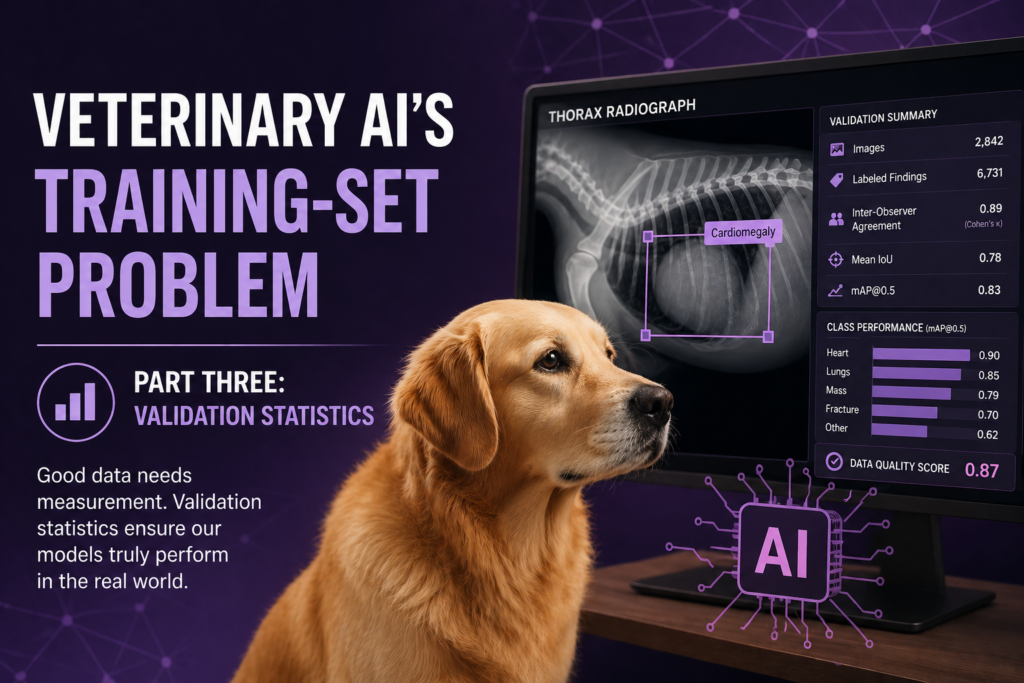

Veterinary AI’s Training-Set Problem — Part Three: The Validation Statistics

“The first two parts of this investigation calculated the labor required to produce the training corpora claimed by SignalPET, Vetology, and Antech RapidRead, and demonstrated that the math does not work: at the simplest annotation step, at the bounding-box step, at the segmentation step, and against the structural infrastructure veterinary medicine has not built. This article closes the series by addressing what happens after training is supposedly complete: what the products are required to demonstrate, what they actually demonstrate, and the corporate revenue model that explains why a category of medical decision-support software operates entirely outside the validation framework that constrains its human-medicine equivalent. The two halves of this article differ in tone (the first is technical and statistical, the second structural and economic), but they answer the same question: why is the foundational accuracy claim of commercial veterinary AI radiology software so consistently weak, and why does it so consistently lack the kind of independent verification that the human-side AI category requires as a precondition of going to market?”