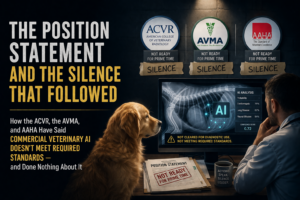

Vet AI Position Statement: 18 Months of Institutional Silence

“In March 2025, the American College of Veterinary Radiology (ACVR) and the European College of Veterinary Diagnostic Imaging (ECVDI) published a joint position statement in JAVMA establishing that commercial veterinary AI radiology products do not currently meet the standards required for safe deployment in clinical practice. The statement was the formal, peer-reviewed expression of the specialty colleges' finding that an entire commercial product category falls short of the threshold for clinical use. In the eighteen months since, the institutions positioned to act on its findings have not done so. The ACVR has continued to host the same AI vendors as official conference partners. The American Veterinary Medical Association (AVMA) has issued no policy resolution and has modified no corporate-relationship framework. The American Animal Hospital Association (AAHA), the only voluntary accrediting body for companion-animal veterinary hospitals in the United States and Canada, has completed the first comprehensive refresh of its Standards of Accreditation in its 90-year history without adding any standard that would constrain commercial AI radiology products. The inaction is consistent across all three institutions: it occurred in the same eighteen-month window, after the same documented professional notice, and alongside the same documented corporate-sponsorship architecture connecting each institution to the corporate parents of the AI vendors at issue. This article documents what was said, what was not done, and how the structural pattern of inaction can be explained by examining how veterinary professional self-regulation is funded and organized.”